As interactive media services evolve with the internet, there is a growing demand for applications where users can actively control their perspectives and experiences. To support such interactivity, not only audiovisual content but also contextual information such as “when,” “where,” and “how” the content was recorded becomes crucial. In response, we have designed the Software Defined Media (SDM) Ontology to comprehensively describe spatio-temporal media and enable its structured use.

The SDM Ontology provides a flexible vocabulary for describing video, audio, and sensor data, along with the recording equipment, processing systems, and semantic metadata associated with them. We recorded classical and jazz concerts with multi-angle video and multi-channel audio, annotated the recordings using the SDM Ontology, and published them as Linked Open Data (LOD). This approach allows not only performers and creators but also third-party developers to reuse and reinterpret the data across various applications.
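To make the annotation step more concrete, the following is a minimal sketch of how one recorded track could be written out as RDF triples in N-Triples syntax. The namespace `https://example.org/sdm#` and the term names (`AudioMedia`, `startedAt`, `positionX`, `positionY`, `positionZ`, `recordedBy`) are hypothetical placeholders chosen for illustration, not the actual SDM Ontology vocabulary, and the sample values are invented.

```python
from dataclasses import dataclass

# Hypothetical namespace and term names; the real SDM Ontology IRIs may differ.
EX = "https://example.org/sdm#"
RDF_TYPE = "http://www.w3.org/1999/02/22-rdf-syntax-ns#type"
XSD = "http://www.w3.org/2001/XMLSchema#"


@dataclass
class Recording:
    iri: str         # identifier of the media object
    kind: str        # hypothetical class name, e.g. "AudioMedia"
    started_at: str  # ISO 8601 timestamp ("when")
    x: float         # recorder position in metres ("where")
    y: float
    z: float
    recorder: str    # IRI of the recording device ("how")


def literal(value, datatype):
    """Format a typed literal in N-Triples syntax."""
    return f'"{value}"^^<{XSD}{datatype}>'


def to_ntriples(rec):
    """Return one N-Triples line per statement about the recording."""
    subject = f"<{rec.iri}>"
    statements = [
        (RDF_TYPE, f"<{EX}{rec.kind}>"),
        (EX + "startedAt", literal(rec.started_at, "dateTime")),
        (EX + "positionX", literal(rec.x, "double")),
        (EX + "positionY", literal(rec.y, "double")),
        (EX + "positionZ", literal(rec.z, "double")),
        (EX + "recordedBy", f"<{rec.recorder}>"),
    ]
    return [f"{subject} <{p}> {o} ." for p, o in statements]


# Invented sample values, for illustration only.
track = Recording(
    iri="https://example.org/data/track-1",
    kind="AudioMedia",
    started_at="2020-01-01T19:00:00+09:00",
    x=1.5, y=0.0, z=1.2,
    recorder="https://example.org/data/microphone-1",
)

if __name__ == "__main__":
    print("\n".join(to_ntriples(track)))
```

Keeping the time, position, and device as separate statements is what lets a third party later select media by any one of them without knowing how the files were originally organized.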

A prominent application built on this ontology is “Web360²,” a browser-based interactive viewer. It dynamically retrieves 360-degree videos and spatial audio through SPARQL queries to the published LOD and lets users experience events from arbitrary viewpoints and listening positions. A questionnaire-based subjective evaluation indicated that the application offers intuitive operation and immersive engagement, which makes it promising for education, the arts, and entertainment.
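The data-driven retrieval described above can be sketched as follows. This is an illustrative outline only: the endpoint URL, the `ex:` namespace, and the property names in the query are assumptions made for the example and are not taken from the published dataset or from the Web360² source. The request follows the standard SPARQL 1.1 Protocol (query passed as a `query` parameter), and the parser handles the standard SPARQL JSON results layout.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoint and vocabulary; replace with the real ones before use.
ENDPOINT = "https://example.org/sparql"
QUERY = """
PREFIX ex: <https://example.org/sdm#>
SELECT ?media ?url WHERE {
  ?media a ex:AudioMedia ;
         ex:mediaUrl ?url .
}
"""


def build_request(endpoint, query):
    """Build a SPARQL 1.1 Protocol GET request that asks for JSON results."""
    url = endpoint + "?" + urllib.parse.urlencode({"query": query})
    headers = {"Accept": "application/sparql-results+json"}
    return urllib.request.Request(url, headers=headers)


def parse_bindings(payload):
    """Flatten a SPARQL JSON results document into a list of plain dicts."""
    return [
        {name: cell["value"] for name, cell in binding.items()}
        for binding in payload["results"]["bindings"]
    ]


def fetch_media(endpoint=ENDPOINT, query=QUERY):
    """Run the query over HTTP and return one dict per result row."""
    with urllib.request.urlopen(build_request(endpoint, query)) as response:
        return parse_bindings(json.load(response))
```

A viewer built this way would call something like `fetch_media()` at load time and hand each returned URL to its video or audio player, so new recordings added to the dataset appear without changes to the application code.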

This research contributes a foundational structure for richly contextualized and extensible media experiences. Looking forward, we envision the SDM Ontology playing a key role in AI-assisted content understanding, urban data archiving, and bridging physical and virtual environments in the era of the metaverse.

@article{Sone2023,

title = {An Ontology for Spatio-Temporal Media Management and an Interactive Application},

author = {Takuro Sone and Shin Kato and Ray Atarashi and Jin Nakazato and Manabu Tsukada and Hiroshi Esaki},

url = {https://github.com/sdm-wg/web360square-vue

https://tlab.hongo.wide.ad.jp/sdmo/},

doi = {10.3390/fi15070225},

issn = {1999-5903},

year = {2023},

date = {2023-06-23},

urldate = {2023-06-23},

journal = {Future Internet},

volume = {15},

number = {225},

issue = {7},

abstract = {In addition to traditional viewing media, metadata that record the physical space from multiple perspectives will become extremely important in realizing interactive applications such as Virtual Reality (VR) and Augmented Reality (AR). This paper proposes the Software Defined Media (SDM) Ontology, designed to comprehensively describe spatio-temporal media and the systems that handle them. Spatio-temporal media refers to video, audio, and various sensor values recorded together with time and location information. The SDM Ontology can flexibly and precisely represent spatio-temporal media; the equipment and functions that record, process, edit, and play them; and related semantic information. In addition, we recorded classical and jazz concerts using many video cameras and audio microphones, and then processed and edited the video and audio data with related metadata. Then, we created a dataset using the SDM Ontology and published it as linked open data (LOD). Furthermore, we developed "Web360^2", an application that enables users to interactively view and experience 360-degree video and spatial acoustic sounds by referring to this dataset. We conducted a subjective evaluation by using a user questionnaire. Web360^2 is a data-driven web application that obtains video and audio data and related metadata by querying the dataset.

},

keywords = {},

pubstate = {published},

tppubtype = {article}

}

@workshop{Atarashi2018,

title = {The Software Defined Media Ontology for Music Events},

author = {Ray Atarashi and Takuro Sone and Yu Komohara and Manabu Tsukada and Takashi Kasuya and Hiraku Okumura and Masahiro Ikeda and Hiroshi Esaki},

url = {https://hal.archives-ouvertes.fr/hal-01879099/document?.pdf},

doi = {10.1145/3243907.3243915},

year = {2018},

date = {2018-10-08},

urldate = {2018-10-08},

booktitle = {Workshop on Semantic Applications for Audio and Music (SAAM) held in conjunction with ISWC 2018},

pages = {15-23},

address = {Monterey, California, USA},

abstract = {With the advent of viewing services based on the Internet, the importance of object-based viewing services for interpreting objects existing in space and utilizing them as content is increasing. Since 2014, the Software Defined Media Consortium has been researching object-based media and Internet-based viewing spaces. This paper defines a framework in which event participants and professional recorders each freely share recorded data, and a third party can create an application based on the data. This study aims to provide an SDM ontology-based content management mechanism with a detailed description of the object-based audio and video data and the recording environment. The data can be shared via the Internet and is highly reusable. We implemented this management mechanism and have developed and validated applications that are capable of interactively playing 3D content from any viewpoint.},

keywords = {},

pubstate = {published},

tppubtype = {workshop}

}

@conference{菰原裕2017,

title = {SDM Ontology: An Ontology for Metadata Management of Software Defined Media},

author = {菰原裕 and 塚田学 and 江崎浩 and 曽根卓朗 and 池田雅弘 and 高坂茂樹 and 新麗 and 新善文},

url = {http://tlab.hongo.wide.ad.jp/wp-content/uploads/2019/01/68C32929-95F4-42C0-81C6-8BB4D7E64D8E-2.pdf},

year = {2017},

date = {2017-06-20},

urldate = {2017-06-20},

booktitle = {Multimedia, Distributed, Cooperative, and Mobile Symposium (DICOMO2017)},

address = {Sapporo, Hokkaido, Japan},

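abstract = {Now that the Internet is widespread and new recording devices such as omnidirectional cameras have appeared, demand is growing for audiovisual systems that assume Internet connectivity. In this context, the Software Defined Media (SDM) Consortium is conducting research aimed at controlling viewing spaces in environments that assume object-based media and the Internet. In this study, we created the "SDM Ontology" in order to store and publish, as Linked Open Data (LOD), the data from a classical concert recorded by SDM. In doing so, we discussed and built the class structure and the types of properties so that SDM data can be represented appropriately.},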

keywords = {},

pubstate = {published},

tppubtype = {conference}

}

autonomous driving machine learning

machine learning uav

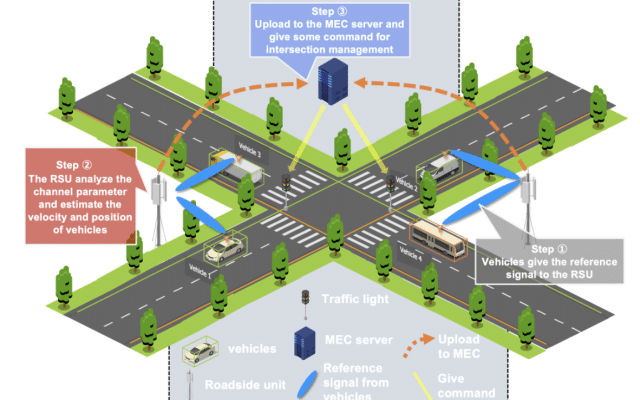

autonomous driving v2x

v2x

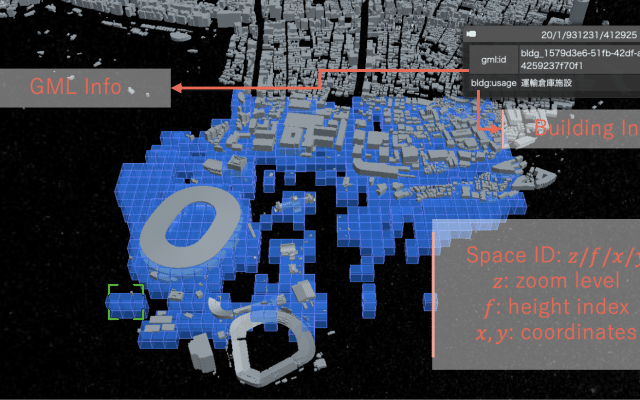

digital twins extended reality

digital twins uav

autonomous driving machine learning

machine learning v2x

We are part of the University of Tokyo’s Graduate School of Information Science and Technology, Department of Creative Informatics, and focus on computer networks and cyber-physical systems.

Address

4F, I-REF building, Graduate School of Information Science and Technology, The University of Tokyo, 1-1-1, Yayoi, Bunkyo-ku, Tokyo, 113-8657 Japan

Room 91B1, Bld 2 of Engineering Department, The University of Tokyo, 7-3-1 Hongo, Bunkyo-ku, Tokyo 113-8656, Japan
