As interest in immersive spatial experiences continues to grow, Tsukada Lab investigates how digital-twin technology can enable accurate, real-time 3D audio environments for mixed-reality (MR) applications. The research focuses on three components: cloud-assisted acoustic simulation driven by large-scale urban geometric models or Building Information Modeling (BIM) data, precise listener positioning, and network-based rendering infrastructure. The goal is dynamic spatial audio that responds realistically to complex geometry and to the listener’s movement.

The platform architecture pairs large-scale 3D city models or BIM data with RTK-GNSS (real-time kinematic GNSS) or SLAM (simultaneous localization and mapping) tracking, which determines the listener’s precise position and orientation. The environment geometry and the listener pose are then fed into spatialization engines that simulate acoustics in real time. By running wave-based audio propagation on parallelized server hardware, the system generates soundscapes that reflect environmental effects such as occlusion, diffraction, and reverberation, so the audio the listener hears changes as they move through physical space.
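To make the data flow above more concrete, here is a minimal Python sketch of the geometric step that connects tracking data to a city model. It is not PLATONE’s implementation: the paragraph describes wave-based propagation, whereas this sketch swaps in a far simpler geometric approximation. A GNSS fix is projected into a local east/north frame (an equirectangular approximation, assumed here), a 2D line-of-sight test against building footprints flags occlusion, and the direct sound path receives an inverse-distance gain, a propagation delay, and an azimuth relative to the tracked heading. All function names, the footprint format, and the fixed occlusion loss are hypothetical.

```python
# Hypothetical sketch: geometric direct-path parameters, NOT PLATONE's wave-based solver.
import math
from dataclasses import dataclass

# Assumed constants for this sketch (not taken from PLATONE).
EARTH_RADIUS_M = 6_378_137.0   # WGS-84 equatorial radius
SPEED_OF_SOUND_M_S = 343.0     # dry air at about 20 degrees C
OCCLUSION_GAIN = 0.25          # hypothetical fixed loss (about -12 dB) when a building blocks the path

Point = tuple[float, float]    # (east, north) in metres


def geodetic_to_local(lat: float, lon: float, ref_lat: float, ref_lon: float) -> Point:
    """Project a GNSS fix to east/north metres around a reference origin.

    Equirectangular approximation: adequate over a few kilometres,
    not a substitute for the projection used by the city model.
    """
    east = math.radians(lon - ref_lon) * EARTH_RADIUS_M * math.cos(math.radians(ref_lat))
    north = math.radians(lat - ref_lat) * EARTH_RADIUS_M
    return east, north


def _cross(a: Point, b: Point, c: Point) -> float:
    # z-component of (b - a) x (c - a); its sign tells which side of a->b point c lies on
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def segments_cross(p1: Point, p2: Point, q1: Point, q2: Point) -> bool:
    """True if segments p1-p2 and q1-q2 properly intersect (grazing contacts are ignored)."""
    return (_cross(q1, q2, p1) * _cross(q1, q2, p2) < 0
            and _cross(p1, p2, q1) * _cross(p1, p2, q2) < 0)


def is_occluded(source: Point, listener: Point, footprints: list[list[Point]]) -> bool:
    """2D line-of-sight test against every edge of every building footprint polygon."""
    for poly in footprints:
        for i in range(len(poly)):
            if segments_cross(source, listener, poly[i], poly[(i + 1) % len(poly)]):
                return True
    return False


@dataclass
class DirectPath:
    distance_m: float
    delay_s: float
    gain: float
    azimuth_deg: float  # source direction relative to listener heading; positive = to the right
    occluded: bool


def direct_path(source: Point, listener: Point, heading_deg: float,
                footprints: list[list[Point]]) -> DirectPath:
    """Parameters a binaural renderer could use for the direct sound path."""
    dx, dy = source[0] - listener[0], source[1] - listener[1]
    distance = math.hypot(dx, dy)
    bearing = math.degrees(math.atan2(dx, dy))                 # 0 = north, 90 = east
    azimuth = (bearing - heading_deg + 180.0) % 360.0 - 180.0  # wrap to [-180, 180)
    occluded = is_occluded(source, listener, footprints)
    gain = 1.0 / max(distance, 1.0)                            # inverse-distance law, clamped near the source
    if occluded:
        gain *= OCCLUSION_GAIN
    return DirectPath(distance, distance / SPEED_OF_SOUND_M_S, gain, azimuth, occluded)


if __name__ == "__main__":
    # Hypothetical scene: one square building between a listener facing east and a source.
    building = [(10.0, -5.0), (20.0, -5.0), (20.0, 5.0), (10.0, 5.0)]
    listener = geodetic_to_local(35.0, 139.0, 35.0, 139.0)  # listener at the local origin
    print(direct_path((30.0, 0.0), listener, 90.0, [building]))
```

A wave-based solver would replace the binary occlusion flag with diffraction around building edges and would add reflected and reverberant energy; the sketch only shows where the tracked pose and the city geometry enter the computation.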

Several prototypes and field deployments have demonstrated the feasibility of this approach, including smartphone-based AR systems for visualizing audio, remotely rendered immersive urban soundscapes, and real-time synchronization with building-scale acoustic simulations. Beyond validating the technical architecture, these deployments provide data for assessing simulation accuracy in different environments.
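This page does not state which accuracy metrics the PLATONE studies use, so the following Python sketch assumes one common option for illustration: comparing reverberation time (RT60) estimated from a measured and a simulated impulse response. It applies Schroeder backward integration and a T20-style fit (a least-squares line between -5 dB and -25 dB on the energy decay curve, extrapolated to -60 dB). The function names and the synthetic test signal are hypothetical.

```python
# Hypothetical sketch: one possible accuracy metric (RT60 comparison), assumed for illustration.
import math


def energy_decay_curve_db(ir: list[float]) -> list[float]:
    """Schroeder backward integration of an impulse response, normalised to 0 dB at t = 0."""
    edc = [0.0] * len(ir)
    acc = 0.0
    for i in range(len(ir) - 1, -1, -1):  # integrate squared samples from the tail backwards
        acc += ir[i] * ir[i]
        edc[i] = acc
    total = edc[0]
    if total == 0.0:
        raise ValueError("impulse response contains no energy")
    return [10.0 * math.log10(e / total) if e > 0.0 else -math.inf for e in edc]


def estimate_rt60(ir: list[float], fs: float,
                  upper_db: float = -5.0, lower_db: float = -25.0) -> float:
    """T20-style estimate: least-squares line through the EDC between upper_db and lower_db,
    extrapolated to a 60 dB decay."""
    points = [(i / fs, d) for i, d in enumerate(energy_decay_curve_db(ir))
              if lower_db <= d <= upper_db]
    if len(points) < 2:
        raise ValueError("impulse response does not cover the evaluation range")
    mean_t = sum(t for t, _ in points) / len(points)
    mean_d = sum(d for _, d in points) / len(points)
    slope = (sum((t - mean_t) * (d - mean_d) for t, d in points)
             / sum((t - mean_t) ** 2 for t, _ in points))  # dB per second (negative for a decay)
    return -60.0 / slope


def rt60_relative_error(measured_ir: list[float], simulated_ir: list[float], fs: float) -> float:
    """Signed relative error of the simulated RT60 with respect to the measured one."""
    measured = estimate_rt60(measured_ir, fs)
    return (estimate_rt60(simulated_ir, fs) - measured) / measured


def synthetic_ir(rt60: float, fs: float, duration_s: float) -> list[float]:
    """Noise-free exponential decay with a known RT60 (a test signal, not real data)."""
    tau = rt60 / (3.0 * math.log(10.0))  # amplitude time constant giving 60 dB energy decay at rt60
    return [math.exp(-(n / fs) / tau) for n in range(int(duration_s * fs))]


if __name__ == "__main__":
    fs = 8000
    measured = synthetic_ir(0.5, fs, 2.0)   # stand-in for an in-situ recording
    simulated = synthetic_ir(0.6, fs, 2.0)  # stand-in for a rendered response
    print(rt60_relative_error(measured, simulated, fs))
```

Real in-situ recordings would first need background-noise handling, such as truncating the response where its decay meets the noise floor, which this sketch omits.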

By integrating geospatial infrastructure, embedded sensing, and audio rendering pipelines, the project advances the state of the art in spatial computing and urban informatics. It lays the foundation for a programmable audio infrastructure that can enhance urban navigation, cultural experiences, and situational awareness through immersive, context-aware sound design.

@inproceedings{Orsholits2024b,
title = {PLATONE: Assessing Simulation Accuracy of Environment-Dependent Audio Spatialization},
author = {Alex Orsholits and Eric Nardini and Manabu Tsukada},
doi = {10.1145/3732437.3732746},
year = {2024},
date = {2024-11-28},
urldate = {2024-11-28},
booktitle = {International Conference on Intelligent Computing and its Emerging Applications (ICEA2024)},
pages = {57--62},
address = {Tokyo, Japan},
abstract = {As the convergence of physical and virtual spaces gains importance in the Internet of Realities (IoR), accurate spatial audio is critical for enhancing immersive urban experiences. In previous work, PLATONE [2] introduced a platform for city-scale, environment-dependent audio spatialization using 3D georeferenced data. Expanding on this foundation, this paper presents a preliminary assessment of PLATONE’s audio simulation accuracy in Montreux, Switzerland, utilizing 3D building and topographic data from the Swiss Federal Office of Topography (swisstopo). By comparing real-world audio propagation—captured in-situ using high-resolution microphones—with simulated audio rendered via PLATONE, this study aims to evaluate the platform’s precision in a new urban context. The findings highlight the impact of environmental factors such as topography and building geometry on the spatialization model, providing insights for improving large-scale immersive audio systems.
},
keywords = {},
pubstate = {published},
tppubtype = {inproceedings}
}

@inproceedings{Orsholits2024,
title = {PLATONE: An Immersive Geospatial Audio Spatialization Platform},
author = {Alex Orsholits and Yiyuan Qian and Eric Nardini and Yusuke Obuchi and Manabu Tsukada},
doi = {10.1109/MetaCom62920.2024.00020},
year = {2024},
date = {2024-08-12},
urldate = {2024-08-12},
booktitle = {The 2nd Annual IEEE International Conference on Metaverse Computing, Networking, and Applications (MetaCom 2024)},
address = {Hong Kong, China},
abstract = {In the rapidly evolving landscape of mixed reality (MR) and spatial computing, the convergence of physical and virtual spaces is becoming increasingly crucial for enabling immersive, large-scale user experiences and shaping inter-reality dynamics. This is particularly significant for immersive audio at city-scale, where the 3D geometry of the environment must be considered, as it drastically influences how sound is perceived by the listener. This paper introduces PLATONE, a novel proof-of-concept MR platform designed to augment urban contexts with environment-dependent spatialized audio. It leverages custom hardware for localization and orientation, alongside a cloud-based pipeline for generating real-time binaural audio. By utilizing open-source 3D building datasets, sound propagation effects such as occlusion, reverberation, and diffraction are accurately simulated. We believe that this work may serve as a compelling foundation for further research and development.},
keywords = {},
pubstate = {published},
tppubtype = {inproceedings}
}

@inproceedings{Takada2024,
title = {Design of Digital Twin Architecture for 3D Audio Visualization in AR},
author = {Tokio Takada and Jin Nakazato and Alex Orsholits and Manabu Tsukada and Hideya Ochiai and Hiroshi Esaki},
doi = {10.1109/MetaCom62920.2024.00044},
year = {2024},
date = {2024-08-12},
urldate = {2024-08-12},
booktitle = {The 2nd Annual IEEE International Conference on Metaverse Computing, Networking, and Applications (MetaCom 2024)},
address = {Hong Kong, China},
abstract = {Digital twins have recently attracted attention from academia and industry as a technology connecting physical space and cyberspace. Digital twins are compatible with Augmented Reality (AR) and Virtual Reality (VR), enabling us to understand information in cyberspace. In this study, we focus on music and design an architecture for a 3D representation of music using a digital twin. Specifically, we organize the requirements for a digital twin for music and design the architecture. We establish a method to perform 3D representation in cyberspace and map the recorded audio data in physical space. In this paper, we implemented the physical space representation using a smartphone as an AR device and employed a visual positioning system (VPS) for self-positioning. For evaluation, in addition to system errors in the 3D representation of audio data, we conducted a questionnaire evaluation with several users as a user study. From these results, we evaluated the effectiveness of the implemented system. At the same time, we also found issues we need to improve in the implemented system in future works.},
key = {CREST},
keywords = {},
pubstate = {published},
tppubtype = {inproceedings}
}

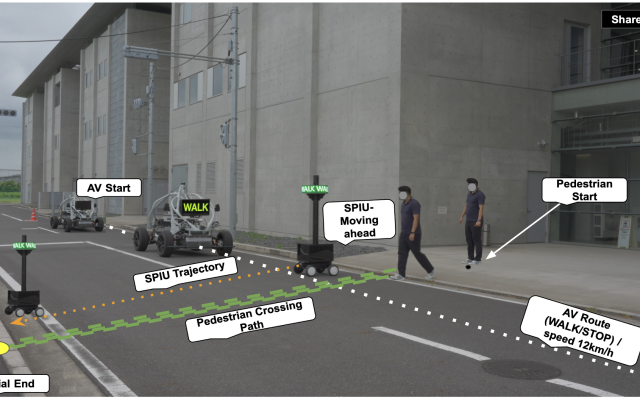

autonomous driving machine learning

machine learning uav

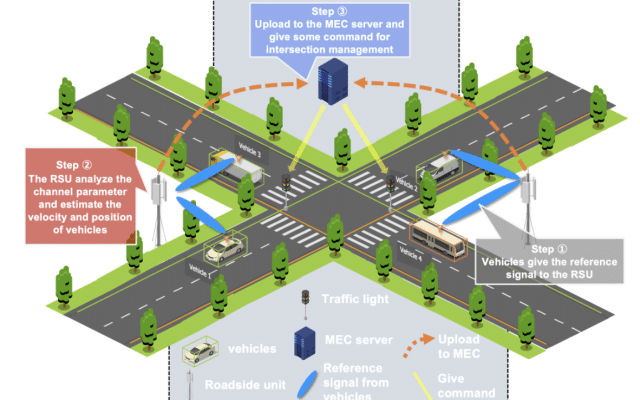

autonomous driving v2x

v2x

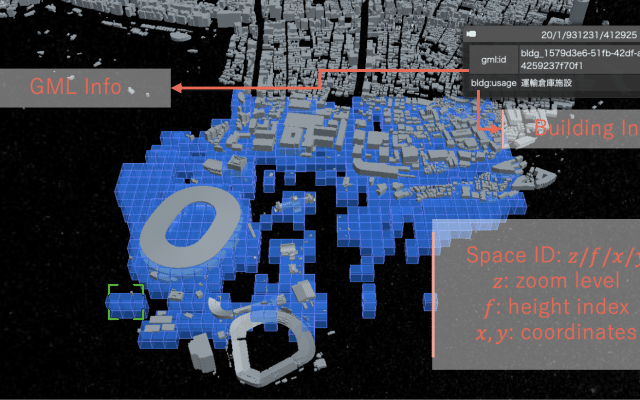

digital twins extended reality

digital twins uav

autonomous driving machine learning

machine learning v2x

We are part of the Department of Creative Informatics in the University of Tokyo’s Graduate School of Information Science and Technology, and we focus on computer networks and cyber-physical systems.

Address

4F, I-REF building, Graduate School of Information Science and Technology, The University of Tokyo, 1-1-1, Yayoi, Bunkyo-ku, Tokyo, 113-8657 Japan

Room 91B1, Bld 2 of Engineering Department, The University of Tokyo, 7-3-1 Hongo, Bunkyo-ku, Tokyo 113-8656, Japan

Mail: