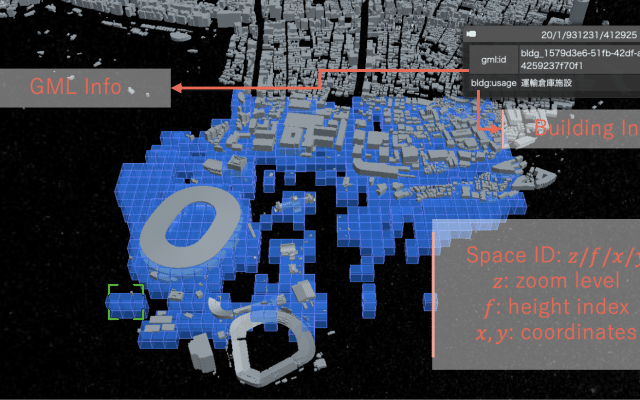

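As interest in immersive spatial experiences grows, the Tsukada Laboratory is researching how digital twin technology can be used to build accurate, real-time 3D acoustic environments. The work focuses on cloud-assisted acoustic simulation built on three inputs: large-scale urban geometry models or BIM data, the listener's position, and a network-based rendering infrastructure. The goal is spatial audio that changes realistically as the listener moves through complex structures.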

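The platform combines city-scale 3D models or BIM data with RTK-GNSS or SLAM-based tracking to obtain the listener's precise position and orientation. This data is passed to a spatial audio engine, which simulates the acoustics in real time. Wave-based sound propagation is computed in parallel on a server, producing a sound field that reflects environmental effects such as occlusion, diffraction, and reverberation. Listeners hear a rich, responsive acoustic environment that changes as they move.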
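Before any propagation can be simulated, the tracked pose must be placed in the same coordinate frame as the 3D model. Each sound source must also be expressed relative to the listener's head. The Python sketch below illustrates that step under the following assumptions:

- The tracker reports WGS84 latitude/longitude plus a compass heading.
- Positions are projected with a local equirectangular approximation. A real deployment would use the model's own projected coordinate system.
- Buildings are 2D footprints.

The line-of-sight test is only a crude, hypothetical occlusion flag. It is not the wave-based simulation described above, which also handles diffraction and reverberation. `ListenerPose`, `to_local_en`, and `listener_relative` are illustrative names, not part of PLATONE.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical approximation


@dataclass
class ListenerPose:
    """Hypothetical pose message from the tracker (e.g. RTK-GNSS position + heading)."""
    lat_deg: float
    lon_deg: float
    heading_deg: float  # compass heading, clockwise from north


def to_local_en(lat_deg, lon_deg, origin):
    """Project lat/lon to (east, north) metres around a model origin.

    Equirectangular approximation: adequate over a few kilometres only.
    """
    origin_lat, origin_lon = origin
    east = EARTH_RADIUS_M * math.radians(lon_deg - origin_lon) * math.cos(math.radians(origin_lat))
    north = EARTH_RADIUS_M * math.radians(lat_deg - origin_lat)
    return east, north


def _segments_cross(p1, p2, q1, q2):
    """True if segment p1-p2 properly crosses q1-q2 (touching/collinear cases ignored)."""
    def orient(a, b, c):
        return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])
    d1, d2 = orient(q1, q2, p1), orient(q1, q2, p2)
    d3, d4 = orient(p1, p2, q1), orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0


def listener_relative(pose, source_en, origin, footprints):
    """Return (distance_m, azimuth_deg, line_of_sight) for one source.

    azimuth_deg lies in [-180, 180): 0 = straight ahead, positive = listener's right.
    footprints: building polygons, each a list of (east, north) vertices.
    """
    lx, ly = to_local_en(pose.lat_deg, pose.lon_deg, origin)
    dx, dy = source_en[0] - lx, source_en[1] - ly
    distance = math.hypot(dx, dy)
    bearing = math.degrees(math.atan2(dx, dy))  # clockwise from north, like the heading
    azimuth = (bearing - pose.heading_deg + 180.0) % 360.0 - 180.0
    # Crude occlusion flag: does the direct path cross any footprint edge?
    occluded = any(
        _segments_cross((lx, ly), source_en, poly[i], poly[(i + 1) % len(poly)])
        for poly in footprints
        for i in range(len(poly))
    )
    return distance, azimuth, not occluded
```

Consider a listener at the model origin facing north. A source 10 m to the east comes out at an azimuth of +90° (to the right). Placing a 2 m wide footprint between them flips the line-of-sight flag to `False`. Distance and relative azimuth are the kind of per-update inputs a binaural renderer would consume.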

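Several prototypes and field demonstrations have confirmed that the approach is feasible. These include mobile audio visualization using a smartphone and an AR framework, remote acoustic rendering of urban spaces, and real-time synchronization with architectural-scale acoustic simulation. The implementations serve two purposes: they validate the technical foundation, and they let the team evaluate simulation accuracy in different environments.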
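The text does not say how simulation accuracy is scored. One standard room-acoustics quantity for comparing an in-situ recording with a simulation is reverberation time. It is derived from Schroeder backward integration of the impulse response. ISO 3382-1 defines T20 as the decay rate fitted between -5 dB and -25 dB, extrapolated to a 60 dB decay.

The sketch below makes two simplifying assumptions:

- A measured and a simulated impulse response are available for the same position, at the same sample rate.
- Signals are treated as broadband for brevity; standard practice evaluates each octave band separately.

It is an illustrative metric, not the evaluation procedure used in the PLATONE studies, and the function names are hypothetical.

```python
import numpy as np


def energy_decay_curve_db(ir):
    """Schroeder backward integration: energy remaining after each sample, in dB re. total."""
    energy = np.asarray(ir, dtype=float) ** 2
    remaining = np.cumsum(energy[::-1])[::-1]
    # Guard against log10(0) for impulse responses that end in exact zeros.
    remaining = np.maximum(remaining, np.finfo(float).tiny)
    return 10.0 * np.log10(remaining / remaining[0])


def reverberation_time_t20(ir, fs):
    """RT60 estimate from a linear fit to the -5 dB to -25 dB part of the decay curve (T20)."""
    edc = energy_decay_curve_db(ir)
    t = np.arange(len(edc)) / fs
    in_range = (edc <= -5.0) & (edc >= -25.0)
    if np.count_nonzero(in_range) < 2:
        raise ValueError("impulse response does not decay by 25 dB")
    slope_db_per_s, _ = np.polyfit(t[in_range], edc[in_range], 1)
    return -60.0 / slope_db_per_s


def compare_reverberation(recorded_ir, simulated_ir, fs):
    """Return (RT recorded, RT simulated, relative error of the simulation)."""
    rt_rec = reverberation_time_t20(recorded_ir, fs)
    rt_sim = reverberation_time_t20(simulated_ir, fs)
    return rt_rec, rt_sim, (rt_sim - rt_rec) / rt_rec
```

For a synthetic impulse response whose energy falls by exactly 60 dB in 0.5 s, `reverberation_time_t20` returns approximately 0.5 s. Comparing it with a simulated response that decays in 0.6 s yields a relative error of about +20%.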

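By integrating geospatial infrastructure, embedded sensing, and audio rendering pipelines, the project advances the state of the art in spatial computing and urban informatics. It is expected to become a foundation for new user experiences built on immersive, context-aware sound design, in areas such as urban navigation, cultural experiences, and improved situational awareness.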

@inproceedings{Orsholits2024b,
  title = {PLATONE: Assessing Simulation Accuracy of Environment-Dependent Audio Spatialization},
  author = {Alex Orsholits and Eric Nardini and Manabu Tsukada},
  doi = {10.1145/3732437.3732746},
  year = {2024},
  date = {2024-11-28},
  urldate = {2024-11-28},
  booktitle = {International Conference on Intelligent Computing and its Emerging Applications (ICEA2024)},
  pages = {57--62},
  address = {Tokyo, Japan},
  abstract = {As the convergence of physical and virtual spaces gains importance in the Internet of Realities (IoR), accurate spatial audio is critical for enhancing immersive urban experiences. In previous work, PLATONE [2] introduced a platform for city-scale, environment-dependent audio spatialization using 3D georeferenced data. Expanding on this foundation, this paper presents a preliminary assessment of PLATONE’s audio simulation accuracy in Montreux, Switzerland, utilizing 3D building and topographic data from the Swiss Federal Office of Topography (swisstopo). By comparing real-world audio propagation—captured in-situ using high-resolution microphones—with simulated audio rendered via PLATONE, this study aims to evaluate the platform’s precision in a new urban context. The findings highlight the impact of environmental factors such as topography and building geometry on the spatialization model, providing insights for improving large-scale immersive audio systems.},
  keywords = {},
  pubstate = {published},
  tppubtype = {inproceedings}
}

@inproceedings{Orsholits2024,
  title = {PLATONE: An Immersive Geospatial Audio Spatialization Platform},
  author = {Alex Orsholits and Yiyuan Qian and Eric Nardini and Yusuke Obuchi and Manabu Tsukada},
  doi = {10.1109/MetaCom62920.2024.00020},
  year = {2024},
  date = {2024-08-12},
  urldate = {2024-08-12},
  booktitle = {The 2nd Annual IEEE International Conference on Metaverse Computing, Networking, and Applications (MetaCom 2024)},
  address = {Hong Kong, China},
  abstract = {In the rapidly evolving landscape of mixed reality (MR) and spatial computing, the convergence of physical and virtual spaces is becoming increasingly crucial for enabling immersive, large-scale user experiences and shaping inter-reality dynamics. This is particularly significant for immersive audio at city-scale, where the 3D geometry of the environment must be considered, as it drastically influences how sound is perceived by the listener. This paper introduces PLATONE, a novel proof-of-concept MR platform designed to augment urban contexts with environment-dependent spatialized audio. It leverages custom hardware for localization and orientation, alongside a cloud-based pipeline for generating real-time binaural audio. By utilizing open-source 3D building datasets, sound propagation effects such as occlusion, reverberation, and diffraction are accurately simulated. We believe that this work may serve as a compelling foundation for further research and development.},
  keywords = {},
  pubstate = {published},
  tppubtype = {inproceedings}
}

@inproceedings{Takada2024,
  title = {Design of Digital Twin Architecture for 3D Audio Visualization in AR},
  author = {Tokio Takada and Jin Nakazato and Alex Orsholits and Manabu Tsukada and Hideya Ochiai and Hiroshi Esaki},
  doi = {10.1109/MetaCom62920.2024.00044},
  year = {2024},
  date = {2024-08-12},
  urldate = {2024-08-12},
  booktitle = {The 2nd Annual IEEE International Conference on Metaverse Computing, Networking, and Applications (MetaCom 2024)},
  address = {Hong Kong, China},
  abstract = {Digital twins have recently attracted attention from academia and industry as a technology connecting physical space and cyberspace. Digital twins are compatible with Augmented Reality (AR) and Virtual Reality (VR), enabling us to understand information in cyberspace. In this study, we focus on music and design an architecture for a 3D representation of music using a digital twin. Specifically, we organize the requirements for a digital twin for music and design the architecture. We establish a method to perform 3D representation in cyberspace and map the recorded audio data in physical space. In this paper, we implemented the physical space representation using a smartphone as an AR device and employed a visual positioning system (VPS) for self-positioning. For evaluation, in addition to system errors in the 3D representation of audio data, we conducted a questionnaire evaluation with several users as a user study. From these results, we evaluated the effectiveness of the implemented system. At the same time, we also found issues we need to improve in the implemented system in future works.},
  key = {CREST},
  keywords = {},
  pubstate = {published},
  tppubtype = {inproceedings}
}

autonomous driving machine learning

machine learning uav

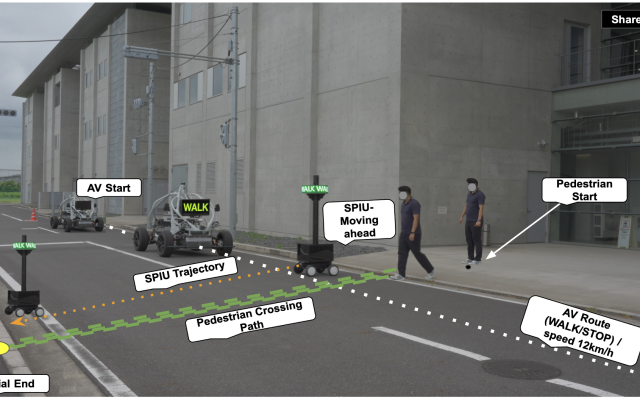

autonomous driving v2x

digital twins extended reality

digital twins uav

autonomous driving machine learning

machine learning v2x